Website crawling and indexing are the processes in search engine optimization (SEO) that let search engines discover website content and store it so that it can appear in search results. Without them, a website cannot appear in search results, however good its content is. Search engines use automated programs to explore websites and collect information about pages, links, and content. They then store that information in a database, called the index, which they consult when users search. A page must be crawled and indexed before it can rank at all, so these two processes form the foundation of SEO. A well-structured, accessible website helps search engines perform both tasks more effectively and improves overall search visibility.

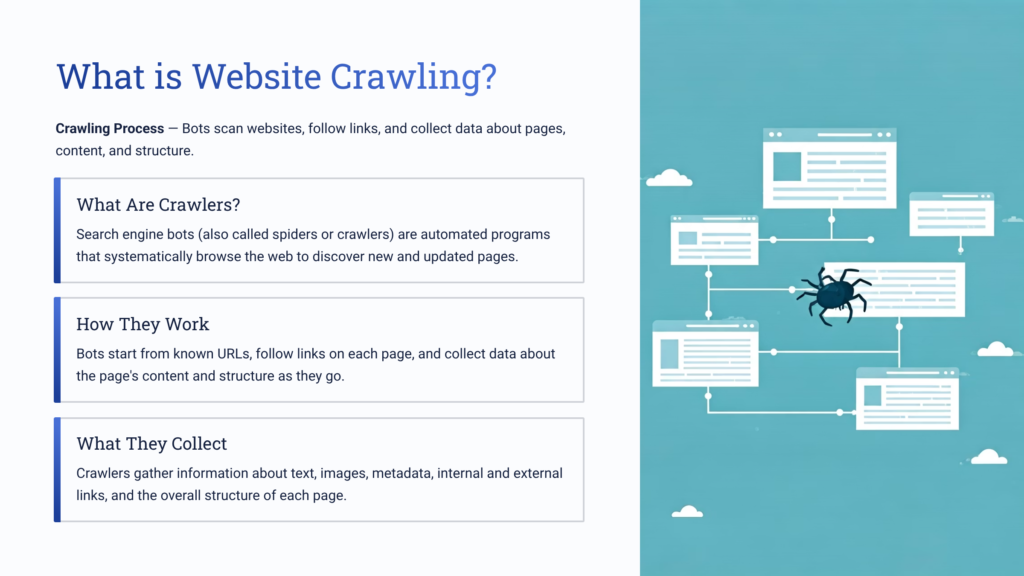

What is Website Crawling?

Website crawling is the process in which search engine bots visit websites and move through their pages to find content and links. These automated programs are called crawlers or spiders (Google's crawler, for example, is Googlebot), and they revisit websites continuously to discover new pages and updates. Crawlers move from one page to another by following links. As they go, they analyze each page's text, images, and links to understand what the page is about.

The collected information is then sent back to the search engine for further processing. Crawling is the first step toward visibility in search results, because a page that is never crawled normally cannot move on to indexing. Crawling also shows search engines how the pages of a website are connected and organized.
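The link-following behavior described above can be sketched as a breadth-first traversal. This is a minimal, hypothetical illustration. Instead of downloading pages over HTTP, it reads links from an in-memory dictionary (`SITE`, with made-up URLs), and it ignores robots.txt, politeness delays, and everything else a real crawler handles. It does show one practical point: a page that no other page links to is never discovered.

```python
from collections import deque

# Hypothetical site: each URL maps to the internal links found on that page.
SITE = {
    "/": ["/about", "/blog"],
    "/about": ["/"],
    "/blog": ["/blog/post-1", "/blog/post-2"],
    "/blog/post-1": ["/blog"],
    "/blog/post-2": ["/blog/post-1"],
    "/old-landing-page": ["/"],  # nothing links here, so a crawl from "/" never finds it
}

def crawl(start, site):
    """Breadth-first crawl: visit a page, then queue every link not seen before."""
    seen = {start}
    queue = deque([start])
    order = []
    while queue:
        url = queue.popleft()
        order.append(url)  # stands in for fetching and processing the page
        for link in site.get(url, []):
            if link not in seen:  # skip pages that are already discovered
                seen.add(link)
                queue.append(link)
    return order

print(crawl("/", SITE))
# ['/', '/about', '/blog', '/blog/post-1', '/blog/post-2']
```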

What is Indexing?

Indexing follows crawling. It is the process of storing the information collected from a webpage in the search engine's database, known as the index, so that the page can appear in search results. After crawling a website, a search engine evaluates what it collected and decides which pages to store. Pages that are accessible and meet the search engine's quality guidelines are the ones most likely to be added, and crawling a page does not guarantee that it will be indexed. The index works like a large library in which webpages are stored and organized. When a user searches, the search engine retrieves relevant pages from this index rather than from the live web. For this reason, a page that is not indexed cannot appear in results. Organizing content this way lets search engines return accurate results quickly.
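A common way to picture the index is as an inverted index. This is the textbook data structure behind full-text search, and it maps each word to the pages that contain it. The sketch below is a toy version under that assumption. Real search engine indexes store far more (quality signals, links, freshness, and so on), and the page texts here are invented for illustration.

```python
import re
from collections import defaultdict

def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def build_index(pages):
    """Map each word to the set of URLs whose text contains it."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in tokenize(text):
            index[word].add(url)
    return index

def search(index, query):
    """Return the URLs that contain every word in the query."""
    words = tokenize(query)
    if not words:
        return set()
    results = set(index.get(words[0], set()))  # copy, so the index is not modified
    for word in words[1:]:
        results &= index.get(word, set())
    return results

# Hypothetical pages that were crawled and accepted into the index.
pages = {
    "/blog/post-1": "How search engines crawl websites",
    "/blog/post-2": "A beginner guide to indexing and search results",
    "/about": "About our SEO agency",
}
index = build_index(pages)
print(sorted(search(index, "search engines")))  # ['/blog/post-1']
```

A page missing from `pages` can never be returned by `search`, however relevant it is. This is the point the paragraph above makes about unindexed pages.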

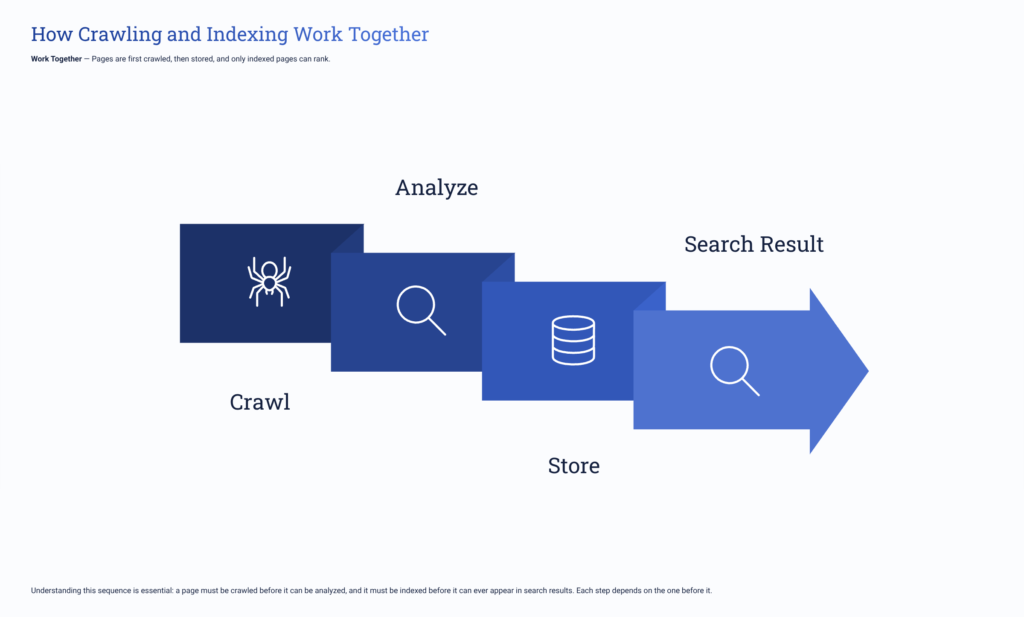

How Crawling and Indexing Work Together

Crawling and indexing run in sequence. A search engine first crawls a website, collecting information about its pages and links to understand its content and structure. It then decides which of the crawled pages to index, based on accessibility and quality guidelines. Pages that meet these requirements are stored in the index and become eligible to appear in search results. A page that is crawled but not indexed will not appear in search listings. The cycle never really finishes, because search engines regularly revisit websites to discover new pages and pick up changes to existing ones. A clear website structure makes both stages more efficient and improves search performance.
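The two stages can be combined into a small pipeline. In this hypothetical sketch, each page carries an `indexable` flag that stands in for whatever eligibility checks a search engine actually applies. The `/thank-you` page is crawled but never indexed, and a second pass picks up a newly published post, which mirrors how search engines revisit sites.

```python
# Hypothetical site: links on each page, plus a stand-in flag for index eligibility.
SITE = {
    "/": {"links": ["/blog", "/thank-you"], "indexable": True},
    "/blog": {"links": ["/"], "indexable": True},
    "/thank-you": {"links": ["/"], "indexable": False},  # e.g. a page marked noindex
}

def crawl(site, start="/"):
    """Return every URL reachable from start by following links."""
    seen, stack = {start}, [start]
    while stack:
        url = stack.pop()
        for link in site[url]["links"]:
            if link not in seen:
                seen.add(link)
                stack.append(link)
    return seen

def update_index(site, index):
    """Crawl the site, then add eligible pages to the index and drop ineligible ones."""
    crawled = crawl(site)
    for url in crawled:
        if site[url]["indexable"]:
            index.add(url)
        else:
            index.discard(url)
    return crawled

index = set()
crawled = update_index(SITE, index)
print(sorted(crawled))  # ['/', '/blog', '/thank-you']
print(sorted(index))    # ['/', '/blog']

# The site publishes a new post and links to it; the next pass discovers and indexes it.
SITE["/blog/new-post"] = {"links": ["/blog"], "indexable": True}
SITE["/blog"]["links"].append("/blog/new-post")
update_index(SITE, index)
print(sorted(index))    # ['/', '/blog', '/blog/new-post']
```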

Factors Affecting Website Crawling

Several technical factors influence how effectively search engines crawl a website. A sitemap, usually an XML file listing the URLs a site wants crawled and indexed, helps search engines discover pages, understand the site's structure, and find new pages faster. Page load speed matters because search engines limit how much time and how many requests they spend on each site. When pages respond quickly, crawlers can get through more of them. Internal links connect pages within a website and give crawlers paths from one page to the next, so linking to important pages from elsewhere on the site improves crawl coverage. Finally, the robots.txt file, placed at the root of a domain, tells crawlers which URLs they may and may not request. A misconfigured robots.txt can block important pages from being crawled, while a correct one steers crawlers toward the content that matters.
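Python's standard library includes a robots.txt parser (`urllib.robotparser`), which makes it easy to check which URLs a given set of rules allows. The sketch below uses a made-up `example.com` robots.txt, keeps only the crawlable URLs, and writes them into a minimal XML sitemap in the sitemaps.org format. Note that Python's parser applies rules in file order. Google, by contrast, documents that it follows the most specific matching rule, so results can differ for files that mix Allow and Disallow lines.

```python
import urllib.robotparser
import xml.etree.ElementTree as ET

# Hypothetical robots.txt for an example site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart
Sitemap: https://example.com/sitemap.xml
"""

def make_robot_parser(text):
    """Build a RobotFileParser from robots.txt text instead of fetching it over HTTP."""
    parser = urllib.robotparser.RobotFileParser()
    parser.parse(text.splitlines())
    return parser

def build_sitemap(urls):
    """Return a minimal sitemaps.org <urlset> document listing the given URLs."""
    urlset = ET.Element("urlset", xmlns="http://www.sitemaps.org/schemas/sitemap/0.9")
    for url in urls:
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="unicode")

robots = make_robot_parser(ROBOTS_TXT)
candidates = [
    "https://example.com/",
    "https://example.com/blog/post-1",
    "https://example.com/admin/settings",
    "https://example.com/cart",
]
# Keep only the URLs that the robots.txt rules let a crawler request.
crawlable = [u for u in candidates if robots.can_fetch("Googlebot", u)]
print(crawlable)  # ['https://example.com/', 'https://example.com/blog/post-1']
print(build_sitemap(crawlable))
```

Listing only URLs that robots.txt allows keeps the two files consistent, so the sitemap never asks search engines to crawl pages the site itself disallows.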

Factors Affecting Indexing

Indexing depends on several factors that determine whether a crawled page is stored in the index. Page quality is one of them. Search engines favor pages that provide useful, substantive information, and thin or low-quality pages may be left out. Uniqueness is another. When several pages carry the same or very similar content, search engines usually index one version and treat the others as duplicates, so original content gives a page a better chance of being indexed in its own right. Meta tags also influence indexing because they pass instructions to search engines. For example, a robots meta tag with the value noindex tells search engines not to store the page, while pages without such a directive may be indexed by default. Accessibility matters too, because a page must be reachable for search engines to store it. Pages that return errors, sit behind a login, or carry a noindex directive will not be indexed.
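The robots meta tag and the `rel="canonical"` link element are the on-page signals most directly tied to the meta tag and duplicate-content points above. The sketch below uses Python's built-in `html.parser` to read both from sample pages, whose HTML is invented. It is only a rough check. It does not look at the `X-Robots-Tag` HTTP header, which can carry the same directives, or at crawler-specific tags such as `googlebot`.

```python
from html.parser import HTMLParser

class IndexingSignals(HTMLParser):
    """Collect robots meta directives and the canonical URL from a page."""

    def __init__(self):
        super().__init__()
        self.robots = set()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)  # attribute values can be None, hence the `or ""` below
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            content = attrs.get("content") or ""
            self.robots.update(d.strip().lower() for d in content.split(","))
        elif tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonical = attrs.get("href")

def indexing_signals(html):
    """Return whether the page asks not to be indexed, and its canonical URL if any."""
    parser = IndexingSignals()
    parser.feed(html)
    parser.close()
    return {"noindex": "noindex" in parser.robots, "canonical": parser.canonical}

thank_you = '<head><meta name="robots" content="noindex, follow"></head>'
tracked_copy = '<head><link rel="canonical" href="https://example.com/blog/post-1"></head>'
print(indexing_signals(thank_you))     # {'noindex': True, 'canonical': None}
print(indexing_signals(tracked_copy))  # {'noindex': False, 'canonical': 'https://example.com/blog/post-1'}
```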

Checking Crawling and Indexing Status

Website owners can use tools such as Google Search Console to check crawling and indexing status. Search Console's Page indexing report shows which pages are indexed and gives a reason for each page that is not. The URL Inspection tool checks how Google sees an individual page. Together, these help website owners find errors and warnings and understand how search engines interact with their sites. Reviewing these reports regularly helps catch problems early, before they affect search visibility.

Fixing Crawling and Indexing Issues

Most crawling and indexing issues can be fixed by improving website performance and removing technical obstacles. Faster pages let search engines crawl more of a site in the same amount of time. Internal links to every important page help search engines discover those pages, which in turn supports indexing. Technical errors, such as server errors and broken links that lead to 404 pages, should be fixed because they stop search engines from reaching content. Finally, check for unintended blocks. A Disallow rule in robots.txt or a leftover noindex tag can keep an otherwise good page out of search results, and removing it restores the page's chance of being crawled and indexed.
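Two of the problems above can be found by comparing a crawl against the sitemap: pages that nothing links to, and links that lead to errors. In this hypothetical sketch, `PAGES` stands in for crawl results (status codes and internal links, all invented) and `SITEMAP` for the URLs the site wants indexed. Any sitemap URL the crawl cannot reach is reported as an orphan. Any reachable URL that returns a 4xx or 5xx status, or that is missing from the crawl data, is reported as an error.

```python
from collections import deque

# Hypothetical crawl data: HTTP status and internal links for each URL.
PAGES = {
    "/": {"status": 200, "links": ["/services", "/blog"]},
    "/services": {"status": 200, "links": ["/contact"]},
    "/blog": {"status": 200, "links": ["/blog/old-post"]},
    "/blog/old-post": {"status": 404, "links": []},
    "/contact": {"status": 200, "links": []},
    "/case-studies": {"status": 200, "links": ["/"]},  # listed in the sitemap, but nothing links here
}
SITEMAP = ["/", "/services", "/blog", "/contact", "/case-studies"]

def reachable(pages, start="/"):
    """Return every URL reachable from start by following internal links."""
    seen, queue = {start}, deque([start])
    while queue:
        for link in pages.get(queue.popleft(), {}).get("links", []):
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return seen

def audit(pages, sitemap, start="/"):
    """Report orphaned sitemap URLs and reachable URLs that return errors."""
    found = reachable(pages, start)
    return {
        "orphans": sorted(u for u in sitemap if u not in found),
        # URLs missing from the crawl data are treated as 404s.
        "errors": sorted(u for u in found if pages.get(u, {}).get("status", 404) >= 400),
    }

print(audit(PAGES, SITEMAP))
# {'orphans': ['/case-studies'], 'errors': ['/blog/old-post']}
```

Fixing the report above would mean linking to `/case-studies` from a page crawlers already reach, and either restoring `/blog/old-post` or removing the link to it.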

Crawling and Indexing as the Foundation of SEO

Crawling and indexing are the foundation of SEO because search engines must discover and store a webpage before they can rank it. Without them, content cannot appear in search results, regardless of its quality. Search engines rely on a clear website structure to find and understand content, so websites that are accessible, fast, and well organized are easier to crawl and index. A sound technical setup therefore supports long-term search visibility and provides the base on which rankings are built.

Conclusion

Website crawling is the process in which search engine bots, called crawlers or spiders, visit websites and explore their pages to find content and links. Indexing follows crawling and stores webpage information in the search engine's database so that it can appear in search results. Crawling depends on factors such as sitemaps, page load speed, internal links, and robots.txt settings, while indexing depends on page quality, unique content, meta tags, and accessibility. Tools such as Google Search Console help website owners monitor crawling and indexing status and fix related issues. Content that is not crawled and indexed cannot appear in search results, so a website should be accessible, fast, and well structured to support both processes.